Book Announcement: Why a Few Teams Keep Shipping AI That Matters

Architected Intelligence is Now Available for Purchase

Some teams have brilliant people, great team dynamics, endless budgets, and executive attention, but they either can’t get their AI initiatives out of the lab or their users simply don’t care about them. Other teams with fewer resources keep shipping useful things. Imperfect but valuable things. Things that actually change how work gets done.

That performance gap is what drove Jeremy Mumford and me to write Architected Intelligence: Principles for Building AI-First Organizations and Technologies, published by Wiley, and now available for purchase.

We are aware of the irony of publishing a physical book about the most transformational technology since the printing press. We have made peace with it.

It takes a certain amount of hubris to write a book. We know the AI community didn’t need one more AI title with a glowing robot head on the cover and a subtitle boldly claiming that we’re “unlocking tomorrow” with a “blueprint of the future.” We wrote Architected Intelligence because over the last few years we kept seeing the same patterns of failure, and a single recurring framework for success.

Before we dive into the patterns of success, we’re certain you recognize the failures. Product teams bolted on AI summaries with a nice ✨emoji and called it innovation, while technical teams built impressive but unscalable demos destined for the proof-of-concept graveyard. Leadership would then grow frustrated as they watched the wild progress on social media and decide to “shake things up.” However, instead of a bold new “AI strategy,” what these companies really needed was a way to think clearly about value, data, models, trust, and organizational execution as one connected system.

These companies needed a structure to architect with artificial intelligence.

The Chaotic Early Days

“The art of progress is to preserve order amid change and to preserve change amid order.” — Alfred North Whitehead

Think back to when ChatGPT launched on November 30, 2022, and the internet promptly lost its mind. We were in the trenches at Pattern, navigating a high-growth ecommerce environment and trying to steer billions in revenue while the AI tsunami swept our feet out from under us.

In retrospect, what followed was a strange period: an odd combination of enormous promise, small context windows, frequent model outages, pricey API calls, and groundbreaking but still limited practical intelligence. The technology was historic, but we were all struggling to turn it into something valuable. The early constraints turned out to be useful because they forced discipline.

The traditional siloed disciplines were failing the AI transformation. In one corner, software engineering didn’t know how to handle the non-deterministic nature of LLMs. In the other, data science was too slow and opaque for the “move fast and break things” pace of generative AI. Knowledge management wasn’t in any corner at all, because the discipline had been mostly forgotten in an “agile” world.

We needed a unified theory.

A Framework Born in the Trenches

As we worked with the first wave of generative AI tools, we found ourselves pulled into a recurring set of five questions.

What exactly are we trying to produce?

What data is actually needed to make that output valuable?

What model behavior matters for this use case?

How do we know whether the system is trustworthy in production?

What is the path to end the “wave of experiments” and begin to scale?

Those questions eventually solidified into a simple framework that drew from software engineering, data science, data engineering, product thinking, and knowledge management. It gave us a practical way to build real AI products and processes and to communicate about them. We call this multidisciplinary approach Architected Intelligence.

We refined the framework further by learning from the builders and thinkers we admired: the Andrej Karpathys and Ethan Mollicks of the world, the research published by the leading AI labs, and the individual researchers and engineers posting publicly from within them. We tested these concepts in the messier places where ideas either become useful or get exposed quickly: internal planning, system design, conference talks, consulting engagements, vendor discussions, customer conversations, AI meetups, roadmap fights, and postmortems. That broader feedback loop mattered.

We received strong encouragement and saw positive initial results, but we also received sharp criticism from smart people, which further refined our framework and principles. For us, the tipping point came when people more brilliant than us took our structure, adapted it to their own domains, and suddenly moved faster. It helped teams break down what had felt like a giant, foggy AI ambition into concrete parts they could actually reason about, communicate, and build.

That was the moment we started taking the idea of a book more seriously, so we partnered with the Wiley team to lay out this framework for you in book form.

The Five Components of Architected Intelligence

Successful AI products and processes consistently execute on five components, in order:

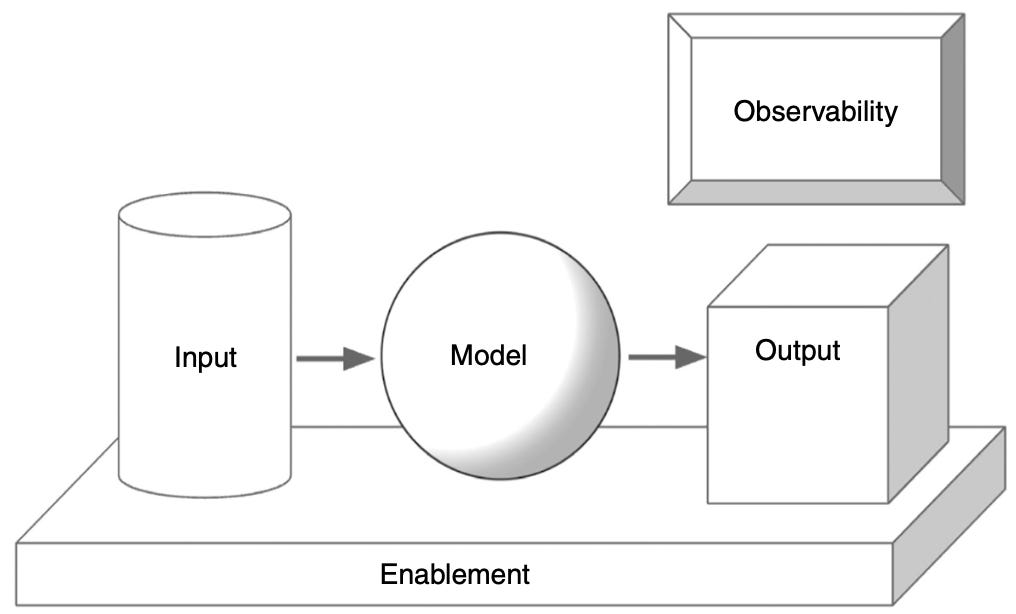

Output — In a world where execution gets cheaper, judgment compounds. What valuable experience are you designing? What is the exact value that each AI call is responsible for? Every downstream technical decision traces back to this.

Input — Power the system with high-quality, “canon” knowledge and differentiated data delivered in the moment it’s needed. This is the AI system’s fuel. Without unique data, your brilliance will be copied and commoditized.

Model — The model that you are leveraging is the engine and NOT the vehicle. Context engineering, fine-tuning, model selection, and cost-latency-intelligence tradeoffs are where technical decisions get made, but only after you’ve defined the output and prepared the input.

Observability — Trust is the currency of AI adoption, and it’s fragile. You need to know what your system is doing, why it’s doing it, and whether that remains acceptable as conditions change. Traditional software observability and data science evaluation each solve half the problem. You need both.

Enablement — The graveyard of AI is full of great prototypes that never scaled. Enablement is the infrastructure, talent, culture, and process that transforms successful experiments into durable organizational capability.

These five components form the structure of the entire book. Each stands on its own, so you can open to the chapter most relevant to your immediate problem and apply it. We believe, however, that the real payoff comes from seeing the full picture: understanding how a failure in Input undermines a perfectly engineered Model, and how gaps in Observability silently destroy trust in an otherwise valuable product.

A Book Built to Last

We wrote the book we wish we’d had as we built our AI platform, products, and processes. It’s grounded in what successful AI projects have in common, whether they were built on GPT-3.5 or on models that didn’t exist six months ago. The principles that govern how you define valuable output, acquire and curate data, configure models, maintain observability, and build enabling infrastructure don’t change when the underlying models do. That’s the bet we made, and we think it holds.

Who This Is For

The primary audience is technical leaders. This includes:

engineering leaders responsible for delivering AI projects that actually work

technical advisors helping organizations navigate transformation

technology leaders charting the map between their AI ambitions and their team’s current ability to execute

However, we also wrote it so that product leaders and others working close to AI can follow the arc and develop a core intuition for what quality AI projects require. If your roadmap relies on blind trust and crossed fingers and you’re looking for a remedy, this book was written for you.

For the Builders

In the end, this book is for the people who we believe will inherit the AI-first future. The builders. The ones who eschew the hype cycles and simply want to create something beautiful and valuable with this extraordinary mass of matrix algebra, computation, and data that has ushered in a new era.

That’s the spirit behind both this newsletter, The Why Behind AI, and our book, Architected Intelligence. We hope they help you architect your own AI-first future.

Architected Intelligence: Principles for Building AI-First Organizations and Technologies by Jacob Miller and Jeremy Mumford is available now. Published by Wiley, 2026.