Don't Build "Trustworthy" AI

Everyone says they want trust in AI. Almost no one means the same thing.

In 2023, not long after the advent of ChatGPT, I sat in a roundtable discussion on AI with people from academia, business, government, and the media. In other words, a room full of people professionally trained to use the same words differently.

Everyone was confident about where AI was going, which was impressive because despite being in the top 99.9Xth percentile of earliest accounts created on the OpenAI platform, I was still testing and discovering the frontier of ChatGPT’s capabilities. (At the time it was mediocre wedding toasts and confident-sounding legal citations.)

The topic of trust in AI then came up. Everyone agreed trust was VERY important, which was true, but it was also the moment I realized the word trustworthy in the context of AI is not useful. As the conversation continued, it became clear that people were not actually talking about the same thing. Some people meant transparency. Could we understand how the AI reached its answer? Others meant accuracy. Did it get the answer right? Others meant calibration. When the AI sounded confident, was that confidence deserved? Still others meant alignment. Did the system behave according to human goals, or, as one person wondered, were the robots coming for us all?

Everyone was talking past each other, and all of them were using the word trustworthy. That was when I realized “trust” had become a suitcase word, and we needed to stop using the word trust on our team.

Suitcase Words

Marvin Minsky, one of the foundational figures in artificial intelligence, used the term “suitcase word” for words that carry many meanings packed inside them. In his book The Emotion Machine, from all the way back in 2006, Minsky used the idea for overloaded concepts, including:

consciousness

emotion

intelligence

etc.

These are words that sound like they have a singular definition, but every person packs their own meanings, processes, assumptions, and arguments inside them. The suitcase can then be used to bludgeon your ideological opponents.

One of my favorite descriptions of this phenomenon is from a book I read when I was 12 years old:

“When I use a word,” Humpty Dumpty said, in rather a scornful tone, “it means just what I choose it to mean—neither more nor less.”

“The question is,” said Alice, “whether you can make words mean so many different things.”

“The question is,” said Humpty Dumpty, “which is to be master—that’s all.”

— Through the Looking-Glass, and What Alice Found There, by Lewis Carroll

In the roundtable meeting, trust sounded like a “very important” and “shared concern,” but it was really a suitcase. Everyone had packed their own meaning inside it, zipped it shut, and started debating assuming everyone was holding the same bag.

Trust is a tricky concept. A system can be accurate but poorly calibrated. It can be transparent but inaccurate. It can be aligned with company policy but miserable for users. What are the conditions for “trustworthy” and are they dependent on the context?

This is why we use the TACA framework of trust: Transparency, Accuracy, Calibration, and Alignment. Once you unpack trust into TACA, meetings become a little less inspirational and a lot more useful, which is a trade-off I am personally willing to make.

TACA Replaces Trust

Trust is easier to achieve once you unpack the suitcase

TACA separates the internal dimensions of AI trust into four components:

Transparency: Can users sufficiently understand how the system reached its answer?

Accuracy: Does the system produce correct or useful outputs according to the objective?

Calibration: Does the system’s expressed confidence match how likely it is to be right?

Alignment: Does the system behave according to the goals, constraints, and values of the people who create, use, or are affected by it?

Improving one can leave another unchanged, and in some cases improving one can make another worse.

A typical AI trust conversation can sound something like this:

Product Manager: “Users don’t trust the AI system. We’re losing clients.”

AI Engineer: “The system is 99% accurate. It’s better than it has ever been.”

Product Manager: “We’re still losing customers. The system isn’t trustworthy.”

AI Engineer: “But look at the data! They’re all up and to the right!”

The engineer may be right that accuracy improved. The product manager may be right that they’re losing users. This combination is possible because the two of them are not talking about the same component of trust.

The PM may be talking about calibration and the system might give high-confidence answers in situations where uncertainty would be more appropriate. Or the PM may be talking about alignment and the system might be optimizing for a company policy that technically makes sense but feels adversarial to users. Or maybe the problem is transparency and users may not understand where an answer came from, so they refuse to rely on it even when it is correct.

Now compare that to the same conversation using TACA:

Product Manager: “The AI isn’t sufficiently calibrated for our users. It expresses high confidence on complex, high-stakes topics where users should be cautious.”

AI Engineer: “That makes sense. The latest model improved accuracy, but calibration may have dropped. We can test confidence language, escalation thresholds, and uncertainty signaling.”

The team can now investigate a specific failure mode and decide whether the use case requires better calibration, where the system should hedge, when it should escalate, and whether a small reduction in raw accuracy is worth a better user experience.

In other words, TACA turns the argument from “is it trustworthy?” into “which part of trust is failing, how much does that part matter here, and what are we willing to trade to improve it?”

Although this post won’t cover the full depth of TACA (consult our book), below are a few key attributes to keep in mind for each.

Transparency – Can users see enough to judge the answer?

Transparency, along with any other element of TACA, may not be required for your use case. If your users take a picture of a bird and the AI correctly determines its species 99% of the time, do you or your users truly care how it came to the answer?

In other contexts, transparency is essential. This is especially the case when the stakes are high, the consequences are public, or the environment is regulated. If an AI system recommends a hiring decision, medical action, insurance denial, legal interpretation, or significant financial move, “the model said so” is not going to carry the room, nor should it. A transparent system gives stakeholders something to inspect, challenge, verify, and improve.

This is also where many teams confuse explanation with performance. A clear explanation does not make an answer correct. Transparency is not trust by itself, but it does provide visibility needed to evaluate whether trust is warranted.

Accuracy – Did it get the right answer, and what does “right” mean?

Accuracy sounds like the simplest part of trust. Did the model get the answer right? Wonderful. Put it on the dashboard and give it a nice green color.

Unfortunately, accuracy is only simple when the cost of every error is the same. “The model is 99% accurate” is comforting until you learn after the fact that in the remaining 1% your CFO just bet the company’s future.

Accuracy is the correspondence between the AI system’s outputs and the intended objective, but the intended objective has to be defined. Different users often care about different failures. A model that is highly precise on average but occasionally misses wildly may be preferred by someone managing huge workflows and seeking upside, but it would be rejected out of hand by someone else managing risk.

A system that performs well across a broad benchmark may still be unacceptable if its errors cluster around sensitive, expensive, or legally exposed cases.

A bird-species classifier can often be measured with straightforward correctness: did it identify the bird in the photo? Even then, the answer may depend on whether confusing two common sparrows matters less than missing an endangered species.

The technical term for this is that the loss function does not match the utility function. In plainer language, the metric the team can easily measure is not always the outcome the user actually cares about. This is why teams need to define accuracy around the use case, the user, and the cost of different errors. Otherwise they optimize what is simple to report rather than what actually improves the outcome.

Calibration – Does the system know when it might be wrong?

Calibration is the alignment between a model’s expressed confidence and the actual likelihood that the output is correct. A well-calibrated system that says something is 70% likely should be right about 70% of the time in comparable situations. When it sounds uncertain, uncertainty should be appropriate. When it sounds confident, that confidence should be earned.

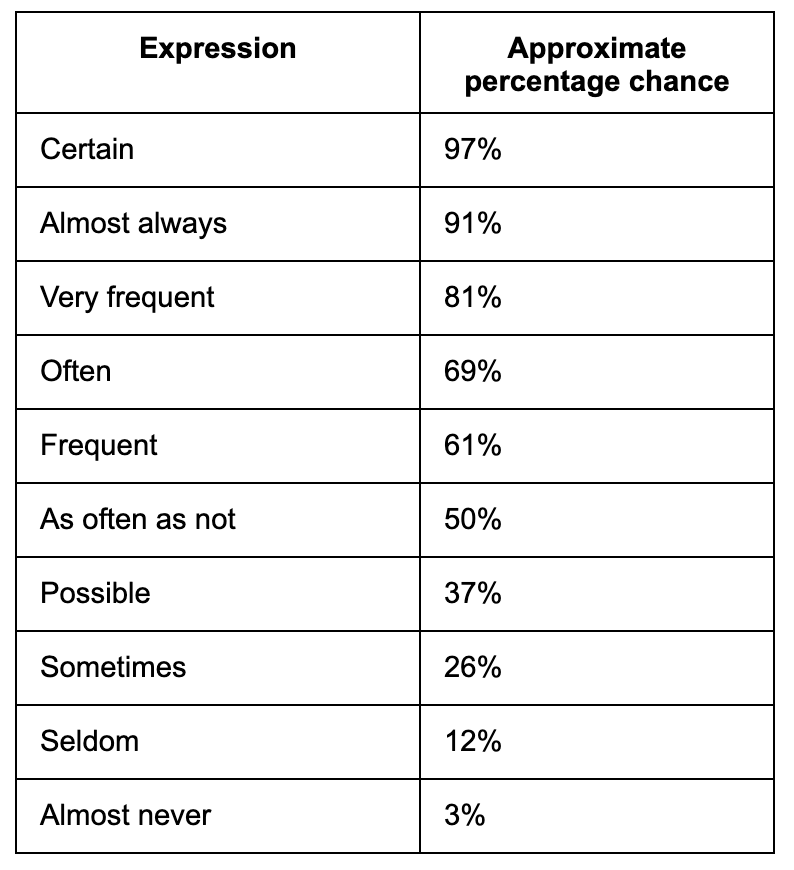

This matters because users adjust their reliance based on perceived certainty, whether the system gives a numeric probability or just uses confident language. Humans naturally convert words into rough probabilities. “Possible” feels different from “often.” “Almost always” feels different from “sometimes.” “Certain” feels like it should have brought a lawyer.

My toddler nephew once explained to me with the utmost confidence how income taxes worked. A poorly calibrated AI acts similarly.

One of my favorite papers is Mosteller and Youtz’s 1990 paper “Quantifying Probabilistic Expressions.” People interpret words as probability signals, even when the speaker, or model, is using them loosely. Below is a snapshot of their research showing how people translated expressions to probabilities.

How well does it match your probabilities? Are you a “often = 80%” kind of person or closer to 60%?

That table is useful because it shows how much meaning humans attach to ordinary uncertainty language. If an AI system says it is “certain” but is right 80% of the time, users will experience that as failure even if the underlying accuracy is objectively impressive.

Calibration becomes especially important in high-stakes or high-ambiguity domains. A legal AI assistant that presents a precedent even though the case was overturned years earlier is dangerous in a different way than a system that says, “This appears relevant, but I have low confidence and recommend verification.”

Alignment: Does the system behave according to the right values and constraints?

Alignment is the extent to which an AI system’s behavior reflects the expectations, goals, constraints, and values of the people who create, use, or are affected by it.

This sounds straightforward until you remember stakeholders include users, executives, regulators, lawyers, customers, and at least one person whose explicit stated purpose is “but how can we make this REALLY go viral?”

Alignment is also not the same as giving the user whatever they ask for. A child-facing AI application should not behave like an unrestricted general assistant. A car dealership AI assistant can refuse to help a random user write a browser extension that shuffles cat videos. A medical assistant or financial assistant must obey constraints that go beyond immediate user preference.

Alignment is where the implicit values become explicit. What does the AI prioritize when goals conflict? Does it favor user autonomy, company efficiency, regulatory caution, safety, growth, retention, speed, politeness, or escalation avoidance? Which users are protected? Which risks are tolerated? Which trade-offs are documented, and which ones are accidentally embedded because nobody wanted to have a difficult meeting?

The bias can never be eliminated, but it can be chosen, documented, tested, and managed. In this respect, AI systems resemble the humans who design and deploy them, which is either comforting or upsetting, depending on your experience.

Alignment is ultimately a negotiation between technical performance and multiple sets of human values, and it changes as strategies, markets, laws, and cultures change. A system can be transparent, accurate, and calibrated, yet still rejected if users believe it is serving the wrong master.

Quality is the weighted combination of TACA

Once trust is unpacked, AI quality becomes easier to discuss. Quality is not simply a universal knob that teams can turn from 7 to 8. Quality is the weighted combination of Transparency, Accuracy, Calibration, and Alignment for a specific use case.

Not every AI system needs the same trust, or “TACA,” profile.

A bird classifier may care mostly about accuracy. Users want to know whether the photo shows a Shoebill Stork or a different bird that looks just as judgmental. Transparency may be helpful, but for many users it is secondary. Calibration may matter if the system shows confidence or offers multiple possible species. Alignment matters less, unless the system is being used for conservation, hunting restrictions, or ecological reporting.

Your AI model has failed to impress the Shoebill Stork

In our work we disaggregate the portion of trust that matters. In our day-to-day Accuracy is, as expected, the most common, but the other elements also factor in as needed. For example, one of our company’s values is “partner obsessed,” where we do not take an action that will benefit the company to the detriment of a brand partner. This alignment ethos is also embedded in how AI is designed.

Without TACA, “trustworthy” becomes a suitcase word packed with conflicting meanings. Unpack the suitcase early. Otherwise the team will spend the next six months arguing about trust while carrying different luggage.

To learn more about TACA and other principles to bring AI to your tech and company, read our book Architected Intelligence: Principles for Building AI-First Organizations and Technologies.

![Source?" "I Made It Up" Highest Quality Version on the Internet [Dr. Manhattan from Watchmen] : r/MemeRestoration Source?" "I Made It Up" Highest Quality Version on the Internet [Dr. Manhattan from Watchmen] : r/MemeRestoration](https://substackcdn.com/image/fetch/$s_!Zg1g!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F10f4c3ad-d655-4476-8fce-1dcb0fd2aed7_1666x2087.png)