Star Wars AI Cybersecurity Theory: Why the Future May Have Terrible Wi-Fi

And practical advice to deal with increasingly powerful attackers

Architected Intelligence: Principles for Building AI-First Organizations and Technologies by Jacob Miller and Jeremy Mumford is available now. Published by Wiley, 2026.

The original Star Wars trilogy provides us with a plausible theory of the AI cybersecurity endgame:

offense wins, and everyone responds by making technology worse on purpose

That sounds backwards at first. Star Wars has faster-than-light travel, planet-destroying weapons, sentient droids, holograms, and, most importantly, laser swords. It also has astonishingly bad interoperability. Droids physically plug into wall terminals, sensitive Jedi archives require physical retrieval, and critical infrastructure (the center of the plot!) is protected by shields, then blast doors, and then an isolated generator.

Our world, in many ways, is more connected than Star Wars. My watch talks to my phone. My phone talks to my car. My car wants to talk to the cloud for… reasons(?) I do not fully trust. More connection = more technological progress. But, maybe, that only works when connection is mostly safe.

In Star Wars, R2-D2 rolls up to a Death Star terminal, plugs in, chirps for a few seconds, and appears to gain administrative access to the Empire’s most sensitive systems. He bypasses those Imperial CAPTCHAs, no problem. One brave little astromech and a maintenance port in “dangerously skip permissions” mode are all it takes.

Did the Empire build a moon-sized battle station and forget to secure the USB port? Or does the cyber world start to look like Star Wars?

Just like Lord Vader, we also recommend purchasing our book Architected Intelligence, today!

That is the actual issue underneath AI and cybersecurity. We should not ask whether AI helps attackers or defenders. It obviously helps both. The useful question is which side compounds faster.

More AI means much more code.

More AI means more cybersecurity.

More AI means more cybercrime.

So which side wins the cybersecurity offense vs. defense battle once AI is added?

(Cybercrime + Code Quantity) vs. Security

Another way to look at offense vs. defense is the “Hacker ROI” of a breach. An attack is worth attempting when:

Probability of Breach * Expected Value of Breach > Cost of Attack
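As a minimal sketch, the inequality can be written as a small Python function. The function name and the dollar figures are invented for illustration and are not drawn from any real incident data.

```python
def attack_is_profitable(p_breach: float, breach_value: float, attack_cost: float) -> bool:
    """Hacker ROI check: is the expected payoff larger than the cost of trying?"""
    if not 0.0 <= p_breach <= 1.0:
        raise ValueError("p_breach must be a probability between 0 and 1")
    return p_breach * breach_value > attack_cost


# Hypothetical numbers: a 1% chance of success against a $500,000 payoff.
expensive_attempt = attack_is_profitable(0.01, 500_000, attack_cost=20_000)
cheap_attempt = attack_is_profitable(0.01, 500_000, attack_cost=1_000)

print("Worth it at $20,000 per attempt:", expensive_attempt)
print("Worth it at $1,000 per attempt:", cheap_attempt)
```

Nothing about the target changes between the two calls. Only the cost of the attempt drops, and that alone flips the answer.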

The optimistic story is that AI helps defenders review code, find vulnerabilities, monitor logs, triage alerts, write patches, summarize incidents, and automate responses. A security team with good AI support can move faster and cover more ground, which matters because modern environments are too large, too messy, and too interconnected for humans to inspect by hand.

The pessimistic story is just as easy to see. AI helps attackers generate better phishing, personalize scams, scan more systems, chain together tools, summarize documentation, write malware variants, test hypotheses, and TIRELESSLY keep trying. Cybersecurity is a race between two optimization processes.

Modern AI agents are becoming more R2-like and can keep going longer with less supervision. OpenAI’s just-released GPT-5.5 reached 78.7% on OSWorld-Verified, a benchmark for operating real computer environments. It’s better at using tools, checking its work, and persisting through complex tasks.

Cybersecurity can be like a spacesuit, where a tiny puncture can become catastrophic because the environment outside is hostile. A company can have a solid security team, reasonable policies, and sound execution, and the attack can still come through any seam.

Like: … phishing email, fake vendor invoice, compromised SaaS account, stolen session token, malicious browser extension, leaked GitHub secret, over-permissioned service account, vulnerable dependency, package typo-squat, forgotten staging environment, old VPN appliance, contractor laptops, shadow IT tools, “help desk” resets, exposed API keys, “helpful” AI agents with malicious instructions…

After the 1984 IRA bombing of the United Kingdom’s Conservative Party conference in Brighton, which targeted but failed to kill the Prime Minister, the IRA issued a statement saying, “Today we were unlucky, but remember we only have to be lucky once. You will have to be lucky always.”

The attacker does not need to understand your company. The attacker needs to “get lucky” on one seam.
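The “lucky once” arithmetic is easy to sketch. The snippet below assumes, purely for illustration, that every seam has the same small chance of giving way and that attempts are independent. Real seams are neither equal nor independent, so treat the output as intuition rather than a risk model.

```python
def p_at_least_one_breach(p_per_attempt: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - p_per_attempt) ** attempts


# Hypothetical: each try has a 1% chance of getting through.
for attempts in (1, 10, 100, 1000):
    chance = p_at_least_one_breach(0.01, attempts)
    print(f"{attempts:>5} attempts -> {chance:.1%} chance of at least one breach")
```

At a 1% success rate per try, 100 tries already give the attacker roughly a 63% chance of getting through somewhere. An attacker who can TIRELESSLY keep trying is mostly buying more attempts.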

Historically, a lot of practical cybersecurity depended on friction: raise the cost of an attack until the ROI equation no longer favors the attacker. If your security was annoying enough, attackers would move on to an easier target.

It is the old bear joke. Two people are running from a bear. One stops to put on running shoes. The other says, “You’ll never outrun the bear.” The first says, “I don’t have to outrun the bear. I only have to outrun you.”

“Make yourself harder to attack than the next company” used to be sound advice, but AI changes the cost curve.

Why Is Airgap The End Point of Offense Winning?

Security typically falls along a few dimensions. You gain access to a system because of:

Something you know (a password or pattern)

Something you have (a phone, hardware token, keycard, or private key)

Something you are (a fingerprint, face, or voice)

Somewhere you are (a trusted device, secure facility, local terminal, office network, or controlled region)

If AI offense obliterates the first three through hacks, social engineering, and other methods, that leaves us with the fourth—our location.

Maybe these plot points don’t sound quite so ridiculous now!

In Rogue One, the Death Star plans are not sitting behind plain old galaxy-wide SSO. They live in a physical archive on Scarif, are retrieved through a mechanical system, and can only be transmitted from a physical tower after a military assault.

The Trade Federation centralized control of its entire droid army on one orbiting space station, minimizing the interfaces that could be used to infect it but maximizing the effect of a well-aimed laser.

Maybe Not Either / Or

Joe Reis recently posted a useful security-related prediction in his Mythos-related piece. The rough idea was that organizations with access to cutting-edge cyber models could defend themselves, while organizations without those models could not. This is the in-between scenario of offense vs. defense.

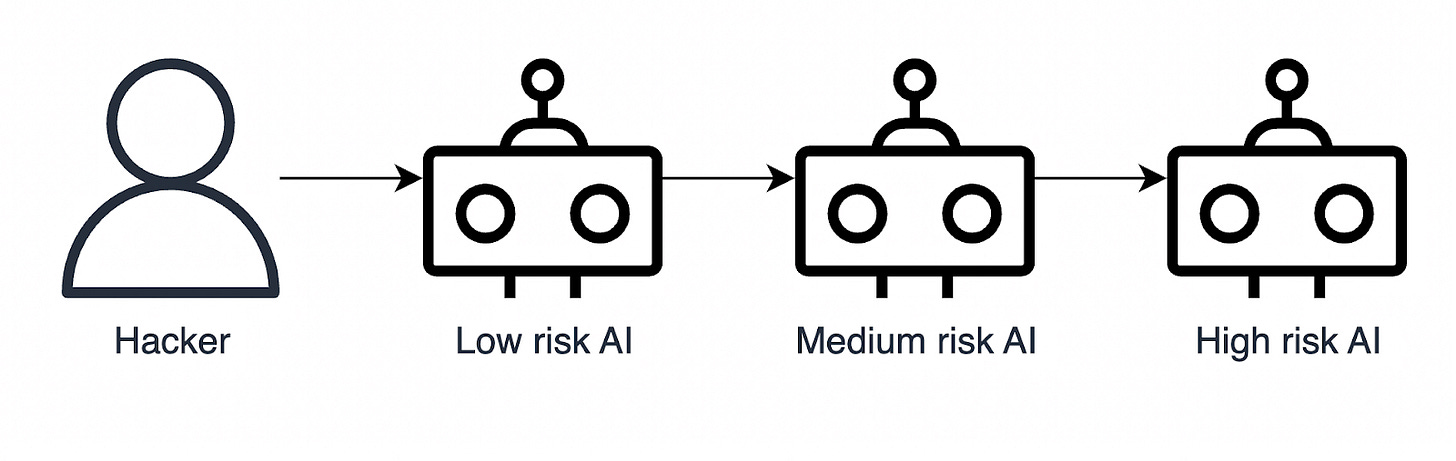

However, attackers do not need to beat the best-defended organization directly if they can walk through the weakest connected partner.
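A toy sketch of that weakest-partner logic: walk every trusted connection leading into an organization and report the least-defended party an attacker could start from. The organization names, the trust map, and the 1-to-10 defense scores are all hypothetical, and a single score per organization is a deliberate oversimplification.

```python
from collections import deque


def weakest_way_in(target: str, trusts: dict[str, list[str]], defense: dict[str, int]) -> tuple[str, int]:
    """Return the least-defended org among the target and everything with a trusted path into it.

    `trusts` maps an org to the partners it accepts connections from.
    """
    seen = {target}
    queue = deque([target])
    while queue:  # breadth-first walk over trusted connections
        org = queue.popleft()
        for partner in trusts.get(org, []):
            if partner not in seen:
                seen.add(partner)
                queue.append(partner)
    weakest = min(seen, key=lambda org: defense[org])
    return weakest, defense[weakest]


# Hypothetical ecosystem: higher score means better defended.
defense = {"fortress_corp": 9, "payroll_vendor": 6, "print_shop": 2}
trusts = {"fortress_corp": ["payroll_vendor"], "payroll_vendor": ["print_shop"]}

entry_point, score = weakest_way_in("fortress_corp", trusts, defense)
print(f"Weakest way in: {entry_point} (defense score {score})")
```

In this made-up ecosystem the best-defended organization inherits the exposure of a print shop it never contracted with directly.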

Most of us have discovered that much of the value of AI stems from its interconnectedness with applications across an ecosystem. Even an “in-between” scenario can quickly result in a world of walled gardens, greatly limiting AI’s impact even if it stops short of a world of Star Wars-like airgaps.

Practical Advice for Today

With all that being said, we aren’t in a battle between Jedi and Sith. We live in the here and now. What can be done today? Here’s a synthesis of practical best practices we have collected from those in AI security.

A system is as vulnerable as its weakest component. Strengthen those weak points, create barriers between systems, or cut them off entirely.

If an AI agent has access to a system or piece of information, assume that anyone who can access that AI agent has access to the same.

Any context that AI pulls from can be used for exploitation. Online “best collections of AI skills and AI connectors” are especially rife with vulnerabilities.

Update your applications to recent upstream versions… BUT NOT TOO FAST. Every old vulnerability can be exploited, but every dependency can also be hit by a supply chain attack, and those attacks most likely surface in the most recent versions, like LiteLLM or Axios or … .
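One way to express “recent, but not too fast” is a cooldown: only adopt the newest release that has been public for some minimum number of days. The sketch below is an assumption-laden illustration, not a feature of any particular package manager. The function name, the seven-day window, and the release data are all hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

Release = tuple[str, datetime]  # (version, publication time)


def newest_settled_release(releases: list[Release], min_age: timedelta,
                           now: Optional[datetime] = None) -> Optional[str]:
    """Pick the most recently published version that is at least `min_age` old."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - min_age
    settled = [release for release in releases if release[1] <= cutoff]
    if not settled:
        return None  # nothing has been public long enough yet
    return max(settled, key=lambda release: release[1])[0]


# Hypothetical release history for an imaginary dependency.
today = datetime(2026, 1, 15, tzinfo=timezone.utc)
history = [
    ("2.0.0", datetime(2025, 12, 1, tzinfo=timezone.utc)),
    ("2.1.0", datetime(2026, 1, 5, tzinfo=timezone.utc)),
    ("2.1.1", datetime(2026, 1, 14, tzinfo=timezone.utc)),  # one day old
]

choice = newest_settled_release(history, min_age=timedelta(days=7), now=today)
print("Version to adopt:", choice)
```

The length of the window is a judgment call: too short and you inherit a freshly poisoned release, too long and you sit on known vulnerabilities.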

The bottom line: This makes for interesting futurecasting, but the immediate action is to take security seriously today, without going full Star Wars airgapped mode, which could also destroy your organization, just more slowly.
