The Near-Future of White-Collar Work

And Why Software Engineering is Ground Zero

Looking for practical guidance and frameworks to transform organizations and technologies with AI? Our new book Architected Intelligence is now available for pre-order!

There has been significant recent hype around the latest AI models and the impending disruption across the economy.

Very few commentators, however, walk through the variables that determine the blast radius. Fewer still provide a playbook for integrating the new technology stack across white-collar work. This article does both: it covers what determines the level of disruption AI causes in a given domain, and the critical moves to incorporate it, whatever the degree of disruption in your space.

The Landscape

Is everything crashing down, or up, or sideways? Is your job or industry ripe for disruption?

Narrow professions, or segments of professions, have already been disrupted by AI. But software engineering is ground zero for the first major, widespread disruption across a large industry. It provides the lessons to apply everywhere else.

The immediate impact of AI is lowering the cost of doing. This means the competitive bottleneck shifts away from raw execution and toward the disciplines that determine whether execution becomes value:

a) choosing the right outcomes,

b) translating those outcomes into real constraints, and

c) learning faster than the competition through iteration and implementation.

This reframing matters because velocity is increasing, but not in a simple “everyone ships more” way. In a world with tight feedback loops, speed tends to win, especially when aimed at deeper user understanding.

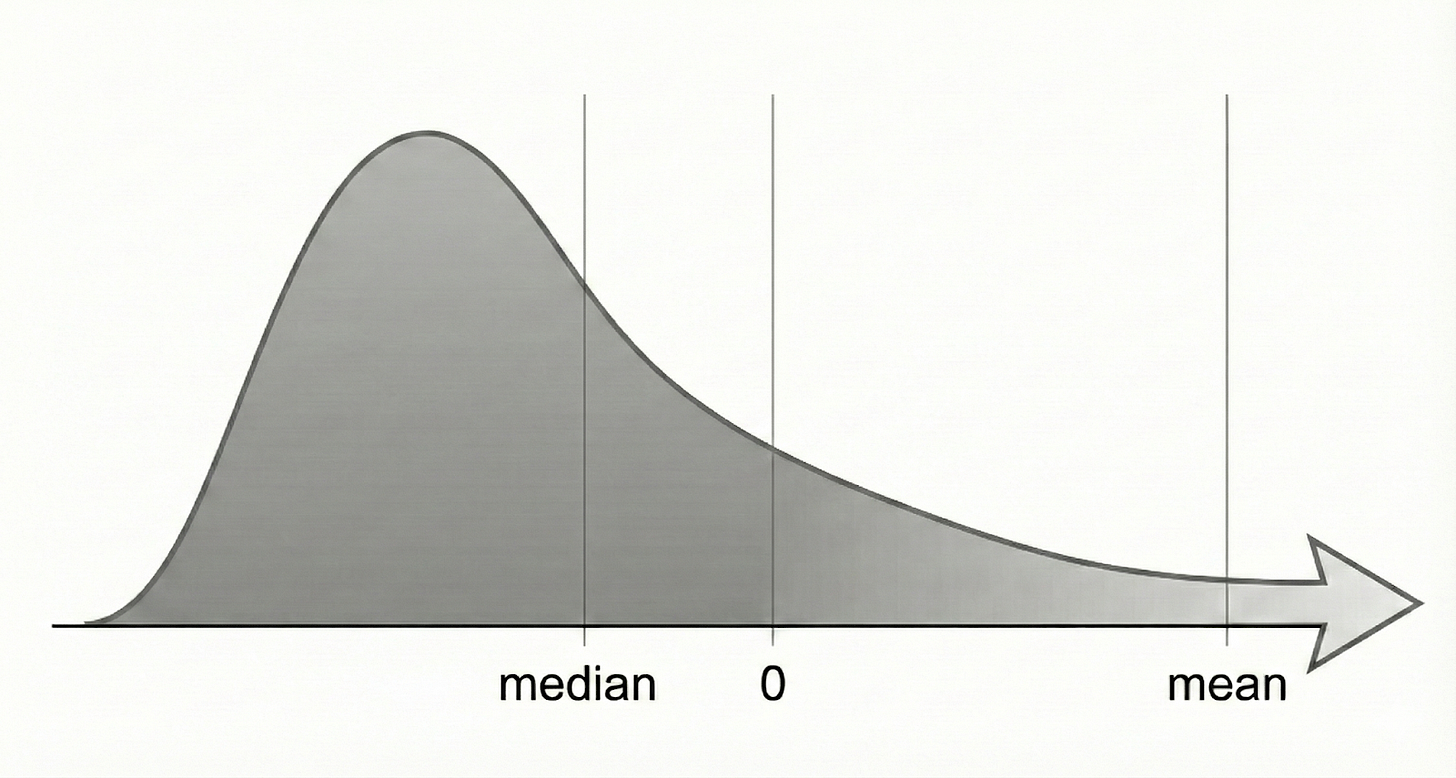

Faster building also changes the economics of experimentation. The median ROI across technology products is negative, while the average ROI is shockingly high because a small number of outliers create enormous value. As AI makes building easier, we expect that pattern to intensify. More teams will run more experiments, more will fail, and the median will fall. But the winners will iterate faster, compound learning sooner, and capture more value, pulling the average higher. Velocity becomes a “fail fast” superpower when paired with disciplined feedback and a clear definition of what good looks like.
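To make the median-versus-average gap concrete, here is a small sketch with made-up numbers. The ROI figures are purely hypothetical, chosen only to show how a single outlier can drag the average far above a negative median; they are not drawn from any dataset.

```python
import statistics

# Hypothetical ROI figures for ten product bets (1.0 means a +100% return).
# Nine lose money or barely break even; one outlier returns 40x.
rois = [-1.0, -0.9, -0.8, -0.6, -0.5, -0.4, -0.2, -0.1, 0.1, 40.0]

median_roi = statistics.median(rois)  # the "typical" bet
mean_roi = statistics.mean(rois)      # dominated by the outlier

print(f"median ROI: {median_roi:+.2f}")
print(f"mean ROI:   {mean_roi:+.2f}")
```

In this toy portfolio the typical bet loses money while the portfolio as a whole is strongly positive. Adding more cheap, failing experiments alongside a bigger winner would push the two numbers further apart.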

The New Roles in an AI-First World

As roles evolve, traditional boundaries blur. The classic separation between functions begins to break down. In software engineering, small teams are already outpacing larger ones and outperforming more credentialed ones.

A comparison that makes this easy to grasp is a restaurant where a robot does the cooking. Someone decides what’s on the menu and what a great meal feels like (Direction), someone designs the kitchen so it can actually produce that meal reliably at scale (Architecture), and someone tastes everything and figures out what needs fixing (Evaluation). While the robot does most of the cooking, the other three roles become more important.

These three roles have become the bottlenecks to creating value.

Direction: the ability to precisely define a valuable end-to-end experience.

Architecture: the ability to translate that experience into constraints, interfaces, and data relationships that create reliable systems.

Evaluation: the ability to measure, debug, and learn through testing, verification, and user feedback.

Crucially, these are NOT necessarily three job titles or even departments. These are three categories of responsibility that will blend within individuals and teams in some companies, but also scale into specialized teams in larger organizations.

Direction

Direction is the discipline of deciding what is worth building and what “good” should feel like when it is built… in detail and within the realm of feasibility of our new, highly capable systems. This is not “an ideas person” waving their hands at science fiction. Understanding the capabilities and the “jagged edges” of AI is a prerequisite to doing Direction well.

The core artifact is a set of vivid user stories detailing walkthroughs of the user experience that are far more specific than most teams produce today. These stories capture the complete experience: how the user discovers the feature, how it behaves under normal use, what happens when something goes wrong, and, crucially, what the user trusts the system to do on their behalf.

Metrics still matter, but they rarely encode the full complexity (or welcome simplicity) of a user experience. Direction includes look and feel, tone and clarity of system responses, workflow structure, and the evidence behind the stories: user research, support tickets, win-loss notes, market signals, and qualitative insight.

A Direction function should be able to answer, with precision: Who is this for? What are they trying to accomplish? What does success feel like? What should the user never experience? When Direction is done well, teams share a definition of a great experience and understand why it creates value.

Architecture

Architecture translates Direction into a reliable system. Once Direction defines the desired experience, Architecture defines the technical realities required to deliver it. This includes: performance requirements like latency, uptime, and graceful degradation; safety requirements around privacy, security, and compliance; and the constraints that keep teams from accidentally breaking things as they move fast.

Architecture is the technically demanding job of defining requirements and constraints. AI can propose designs and generate code, but Architecture must recognize where AI remains weak. In software engineering, its weakest areas include data and infrastructure complexity. These areas still require deeper technical expertise and will continue to do so for at least a couple of years.

Architecture should make it easy to build quickly, now and in the future, without causing severe breakages. It specifies what must be true for the system to behave the way Direction intends, even under load, when dependencies fail, or when new features are added. When Architecture executes well, builders can move fast because they are operating inside a set of constraints and interfaces that prevent accidental catastrophes. It becomes much easier to parallelize work across teams (human and AI) because the system contracts are explicit.

Evaluation

We will never “one-shot” perfection. Evaluation provides the feedback loop for continuous iteration. It is how the organization learns whether the system is actually delivering the experience defined in Direction, within the constraints defined by Architecture.

The speed at which our AI restaurant dishes can be expertly tasted is a primary determinant of success.

Besides speed, a mature Evaluation function distinguishes between:

Bugs that violate expectations

Quality issues that could be improved

Feature requests that expand scope

Each requires a different response. That triage discipline is a core element of Evaluation. It becomes a primary source of competitive advantage. “Move fast, but don’t break anything essential” is now a genuine choice.

Variables of AI Disruption for White-Collar Work

This flow of Direction, Architecture, Evaluation will quickly begin to transform most white-collar work. Software engineering is a special case because it lines up almost perfectly with AI’s current capabilities. Other fields will require more adjustment, but the framework still applies.

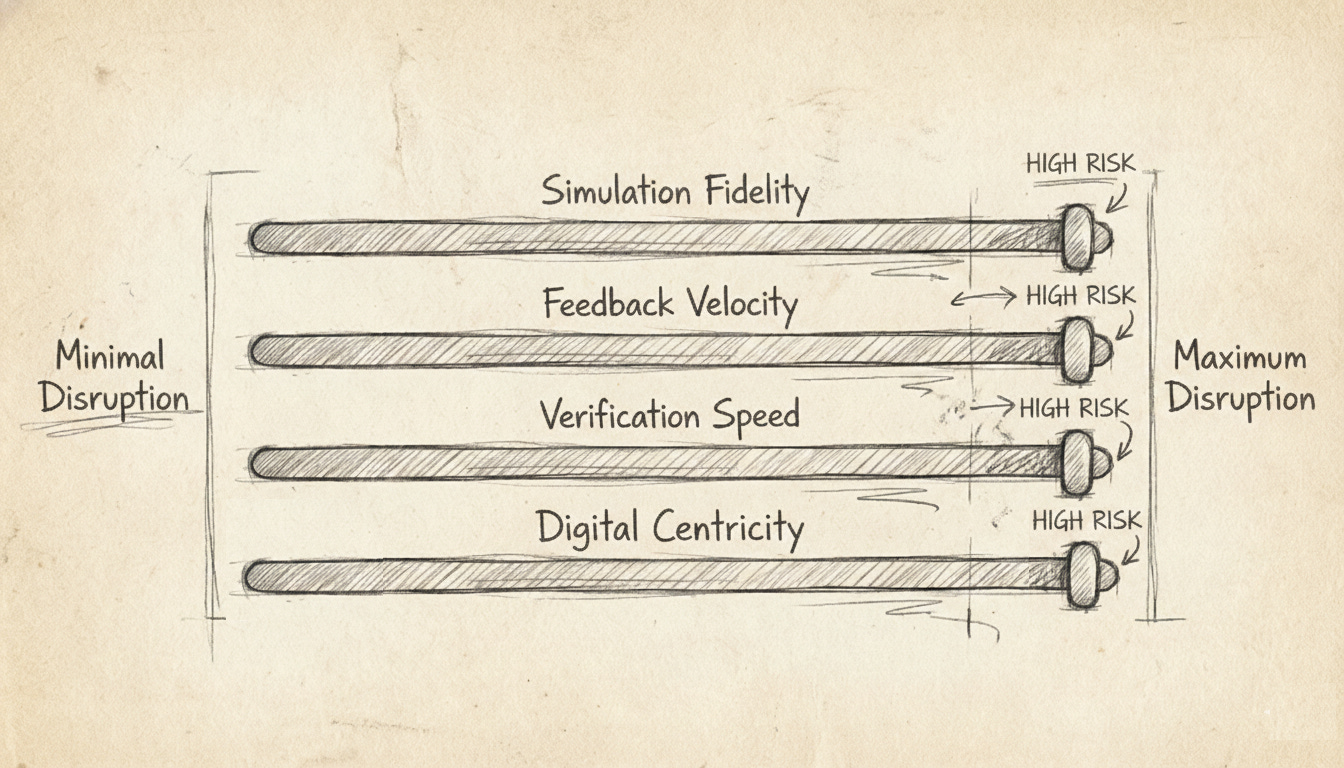

What determines how fast AI comes for a given domain? We identify four spectrums, in order of importance.

Fidelity to Simulation

In 2020, Google DeepMind’s AlphaFold stunned the scientific world by solving protein structure prediction. In a matter of months, a single team with relatively limited experience in the field solved a critical area of basic research that had stumped biologists for fifty years. The team combined novel AI methods with the ability to run simulations at a scale no human researcher could match.

On the other hand, to date, no AI can predict with high accuracy whether a given protein will survive FDA trials for human use. One step further downstream, and the AI hits a wall because we still lack a sufficiently faithful model of how the human body actually responds to medicine.

The deep question at play is: Can the AI check its own work? Can it simulate reality with enough fidelity to know whether it solved the problem, or whether it should keep trying?

Where the answer is yes, the AI transformation will be swift. Software engineering sits at the far end of this spectrum because an AI can attempt thousands of solutions and simulate the final outcomes with nothing but processing cost. Physics, mathematics, and many engineering disciplines share this property.

Wherever there are high fidelity simulations, AI will dominate faster than most expect.

Reversibility + Feedback Velocity

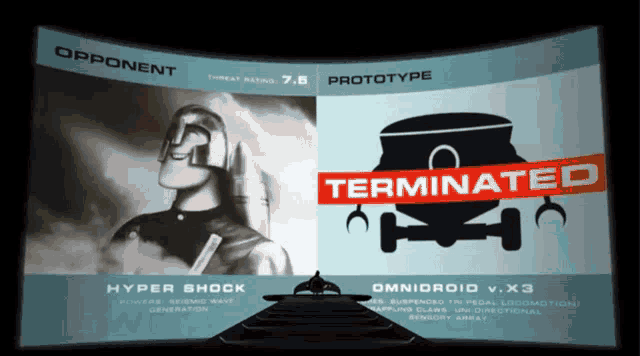

In The Incredibles, the villain [redacted for spoilers] solves his superhero problem with ruthless… inefficiency. He lures heroes to a remote island, unleashes the latest robot, and lets the fight play out. Robot loses? Upgrade it. Repeat until the robot wins… lethally.

Super villains are often not efficient in their A/B testing

We don’t have the time, resources, or morals to run experiments that way. The relevant question for AI’s potential impact in a domain is: Is this a two-way door?

Jeff Bezos’s two-way door heuristic says that if a decision is easily reversible, just try it. For our purposes in AI, we also need tight feedback loops: the outcome needs to be both reversible and visible.

Can we quickly tell if the AI goes wrong, and reverse it with little lasting damage? If yes, AI will move quickly.

Consider an AI proposing changes to a website’s checkout flow: move the cart icon and reposition the purchase button. Rather than debating, run an A/B test. A thousand users get the old experience, a thousand get the new one. Add-to-cart rates tell you everything you need to know within a week.
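For readers who want to see what "add-to-cart rates tell you" might look like in practice, here is a minimal sketch of a two-proportion z-test, one common way to compare two conversion rates. The function name and the conversion counts are hypothetical; only the thousand-users-per-arm setup comes from the example above.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: 1,000 users per arm, old flow (a) vs. new flow (b).
z, p = two_proportion_z_test(conv_a=120, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these invented counts the result sits right around the conventional 5% significance threshold, which is a useful reminder that a thousand users per arm only settles the question when the effect is reasonably large.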

Where high-fidelity simulation isn’t possible, AI will dominate where there is real behavior, low stakes, fast signals, and easy rollbacks.

Bill O’Reilly also recognizes the importance of AI’s capabilities to perform live experiments.

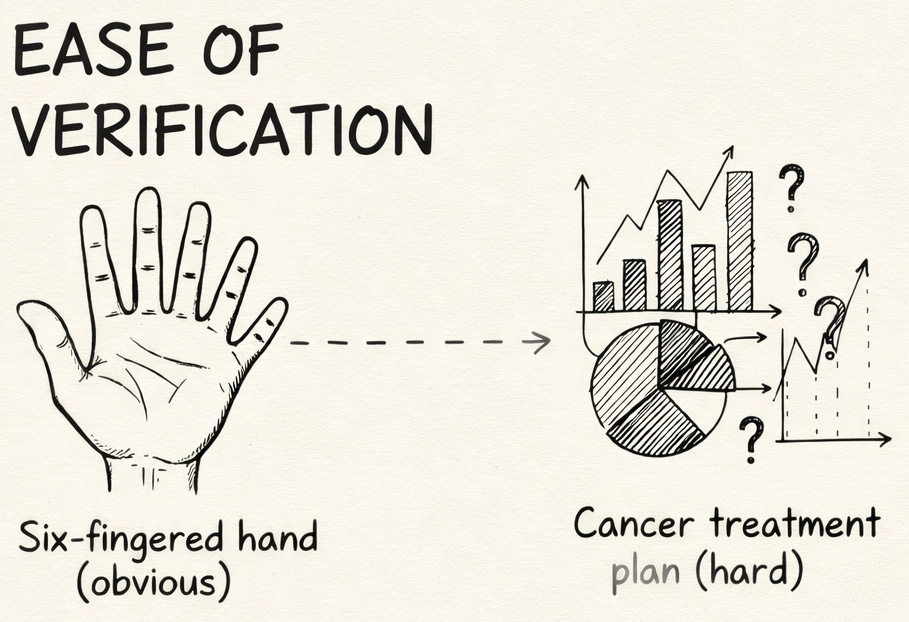

Verification Speed

If a task falls outside high-fidelity simulation and isn’t quickly reversible, that leaves us with human verification of AI outputs.

A skilled graphic designer can request an AI to swap out a portion of an image. Upon reviewing the output, the designer points out, “The hand has six fingers. Fix it.” Verification is fast because the work is easily observable.

Other tasks are inherently harder to verify. For cancer treatment plans, large-scale data engineering, and complex legal analysis, even experts struggle to evaluate quality at a glance. At a certain point, it takes nearly as long to verify and correct the AI’s work as it does to simply do the task yourself. Here, AI may be most useful as a collaborative suggestion engine (a built-in copilot) rather than a primary executor.

The faster the verification speed, the faster AI permeates that type of work.

Digital Centricity

In August 2015, my friend Rhett bet me that autonomous vehicles would be ubiquitous across the USA within two years. Having done a deep dive in the sector recently, I bet scaled rollout across most major US metropolitan areas would occur by the end of 2025. I still undershot. We both lost.

Eleven years after that bet, autonomous vehicles are… kind of here. Driving is a hard problem even though roads are a relatively controlled environment. Navigating a busy city street on foot is harder still, and far more chaotic than anything in cyberspace. Real-world complexity, combined with safety concerns and regulation, makes physically manipulating objects a far harder challenge than most assume.

The more value a job generates from physical-world interactions, the less AI will affect it. The HVAC technician’s hands-on work is safe. Their marketing, scheduling, accounting, and customer feedback workflows are not.

Software Engineering Catapults into AI-First

Now that we understand how and why AI will take on white-collar work generally, we can see that software engineering is ideal for AI disruption along every dimension.

We are being catapulted to a new land of AI-first, but we do get to choose which way we face when launched.

The right questions are:

How reliably can our team transform intent into working systems, receive feedback, and iterate?

How can AI increase the velocity at which that occurs?

The first question is our performance North Star, while the second question is how we will navigate there.

Let’s begin with a wrong answer. Even in software engineering, there are no AI-shaped holes waiting to be filled. We cannot simply sprinkle “AI dust” over legacy workflows and codebases and expect a step-change in productivity.

The right answer is building an AI-first philosophy that treats AI as a primary user of your systems, and not just a tool someone occasionally consults. We call this the User Agnosticism Tenet: products, documentation, and processes should be designed for ease of use by humans, AIs, or a combination of both. When you embrace this, a codebase becomes legible and adaptable to both the agents doing the day-to-day and the people managing them.

This transformation requires shifts along three axes. The industry is swiftly converging on this framework independently. When many teams converge upon the same “new discovery” completely independently, we consider it additional evidence that the direction is sound.

The Infrastructure (Sandbox)

We must first recognize that code does not leap out of a vacuum and execute in isolation. For an AI to be an effective collaborator, it must understand the “room” it is operating in. This means making the cloud infrastructure, security protocols, authorization systems, and testing environments both available and known to it.

An AI-first workspace ensures that the agent knows exactly what resources are at its disposal and, crucially, how to verify its own work. If the AI cannot autonomously spin up a container or run a battery of integration tests to ensure its contribution is stable, it will forever remain a “suggestion engine” and second-class contributor. The infrastructure must be architected so that the AI can navigate the path from “code written” to “code running” with the same level of environmental awareness as a senior systems engineer.
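One way to picture "verify its own work" is a small gate the agent runs before proposing a change. The sketch below is an assumption about how such a gate might look, not a description of any particular tool. The `verify` helper is hypothetical, and the two commands are stand-ins for a project's real unit and integration test commands.

```python
import subprocess
import sys

def verify(commands, timeout_s=600):
    """Run each check in order and stop at the first failure.

    Returns (ok, report), where report lists (command, return code) pairs.
    """
    report = []
    for cmd in commands:
        try:
            result = subprocess.run(
                cmd, capture_output=True, text=True, timeout=timeout_s
            )
            code = result.returncode
        except (subprocess.TimeoutExpired, OSError):
            code = None  # a hung or unlaunchable check counts as a failure
        report.append((cmd, code))
        if code != 0:
            return False, report
    return True, report

# Hypothetical checks; a real project would substitute its own commands.
checks = [
    [sys.executable, "-c", "assert 1 + 1 == 2"],  # stand-in for unit tests
    [sys.executable, "-c", "import json"],        # stand-in for an integration check
]
ok, report = verify(checks)
print("safe to propose change" if ok else "keep iterating")
```

The design choice worth noting is that the gate returns a structured report rather than just a boolean, so an agent (or a human) can see which check failed and iterate on that.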

The Codebase (Self-Awareness)

In an AI-first world, teams must invest in creating a navigable map of each codebase. The AI requires signposts and documentation to progressively disclose where it should be working and how it should be working. The goal here is legibility.

In practice, this means embedding lightweight guide files (agents.md and similar files) throughout the codebase that point AI agents toward the correct modules, pull request standards, and known gotchas. More importantly, the hidden assumptions and user experience intent that once lived only in developers’ heads must become explicit and be embedded directly in the code. (Note: At the moment, you can’t take a shortcut and have AI spin them all up for you. It doesn’t work.)

The goal here is progressive disclosure: the system should provide the right amount of context exactly when it’s needed. When an AI modifies a feature, it should understand both the how and the why. The implicit becomes explicit, and the explicit is surfaced exactly when it’s needed.
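As a rough illustration of progressive disclosure, the sketch below gathers only the guide files that sit on the path from the repository root down to the file being edited, ordered from general to specific. The `agents.md` file name comes from the text above. The `guides_for` helper, the directory layout, and the file contents are hypothetical.

```python
import tempfile
from pathlib import Path

GUIDE_NAME = "agents.md"  # name from the text; the lookup rule is an assumption

def guides_for(target: Path, repo_root: Path) -> list[Path]:
    """Return guide files that apply to `target`, ordered general to specific."""
    target = target.resolve()
    repo_root = repo_root.resolve()
    directory = target if target.is_dir() else target.parent
    directory.relative_to(repo_root)  # raises ValueError if outside the repo
    chain = []
    while True:
        chain.append(directory)
        if directory == repo_root:
            break
        directory = directory.parent
    # Walk root-first so repo-wide guidance precedes module-level detail.
    return [d / GUIDE_NAME for d in reversed(chain) if (d / GUIDE_NAME).is_file()]

# Build a tiny throwaway repo to demonstrate the lookup.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp).resolve()
    (root / "billing" / "invoices").mkdir(parents=True)
    (root / "search").mkdir()
    (root / GUIDE_NAME).write_text("Repo-wide conventions\n")
    (root / "billing" / GUIDE_NAME).write_text("Billing gotchas\n")
    (root / "search" / GUIDE_NAME).write_text("Search gotchas\n")
    found = guides_for(root / "billing" / "invoices" / "render.py", root)
    names = [p.relative_to(root).as_posix() for p in found]
    print(names)
```

An agent editing the billing code sees the repo-wide guide and the billing guide, but not the search guide. That is the point: context arrives when it is relevant instead of all at once.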

The Issues (Communication)

Even the most sophisticated model will fail if it is fed “slop.” This is the ultimate GIGO (Garbage In, Garbage Out) problem. Telling an AI to “fix the bug where the user sometimes gets an error” and then blaming the model is a flagrant skill issue. If the models improve but quality and velocity don’t, we are obviously the bottleneck.

“The fault, dear Brutus, is not in our stars (or our AI models), but in ourselves.”

— Cassius to Brutus, Julius Caesar (mostly)

The “Issue” or work ticket becomes the primary interface for execution. Writing an excellent one requires discipline. If our North Star is, “How reliably can our team transform intent into working systems?” then we need to clarify intent into precise guidance.

We use AI agents that combine codebase context with user intent to workshop each issue collaboratively until it is precise enough for the AI to tackle independently.

We have now created a virtuous cycle. If an AI can’t solve a well-defined issue, that’s a diagnostic signal: either the codebase is too brittle, or the issue writer needs to sharpen their thinking. Treating issue writing as an AI-enhanced craft trains product managers to translate intent into execution and trains engineers to care about user understanding.

The Result and Next Steps

AI helps you communicate precisely with the AI system. The system’s own documentation lets it both push you for further details and execute once the instructions are finalized. The system then simulates and validates the results before implementation.

That’s the new world of software engineering we have been thrown into, and the same shift is beginning to transform human resources, medicine, finance, law, marketing, accounting, and more. Each field will follow a similar arc of Communication, Self-Awareness, and Sandbox. The pace of that change is determined by:

Fidelity to simulation

Reversibility + feedback velocity

Verification speed

Digital centricity

So, what do you do?

First: Recognize that the value of many types of expertise has gone up, not down. Depth of expertise, rather than years of experience, is what to look for and develop, both in yourself and across your teams. Someone with two years of experience focused entirely on one core dimension is more impactful than a ten-year generalist. Why?

Communication and Design: Experts know how to design the process and communicate the nuanced jargon that can command the AI in specific ways.

Evaluation: Experts know what good looks like and can provide high value feedback.

Second: Know where AI disruption will strike first in your domain. In software engineering, AI lags in infrastructure and data engineering but excels at traditional front-end and back-end tasks. Map your equivalent.

Third: Get comfortable operating inside the new flow personally. It’s uncomfortable. Do it anyway on routine tasks until it’s not. You cannot assess the future state without living in it.

Fourth: Begin adapting the organization. Jobs are bundles of tasks. Teams are bundles of jobs. Organizations are bundles of teams and leaders. When the fundamental tasks change, it ripples upward. Growth and structural change will be required, incorporating, among other items, the key points laid out here.

We’re catapulting forward, but you can choose your landing.

