What is an AI Engineer?

Think like a data scientist, build like a software engineer

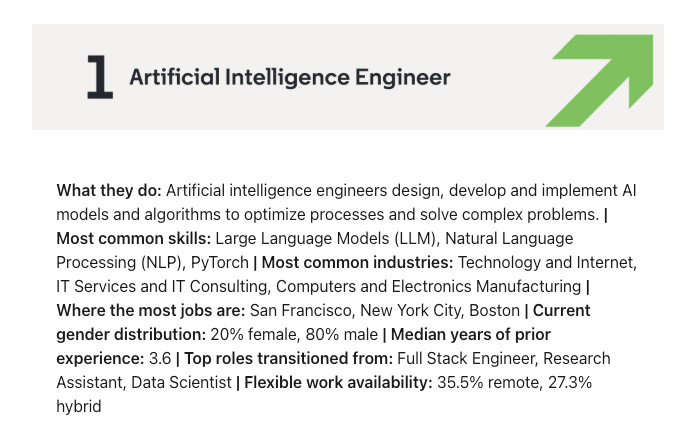

A few years ago, I made the decision to change my title to AI Engineer. At the time, it was a decision that carried risk. Other new and trendy titles like “Prompt Engineer” were in vogue. I felt strongly that AI Engineer was the descriptor that was meant for me, and time has proved me right. Prompt Engineer got laughed out as a legitimate title, but AI Engineer has managed to cement itself as a new fixture in companies. LinkedIn’s 2025 Jobs on the Rise report named Artificial Intelligence Engineer the #1 fastest-growing job.

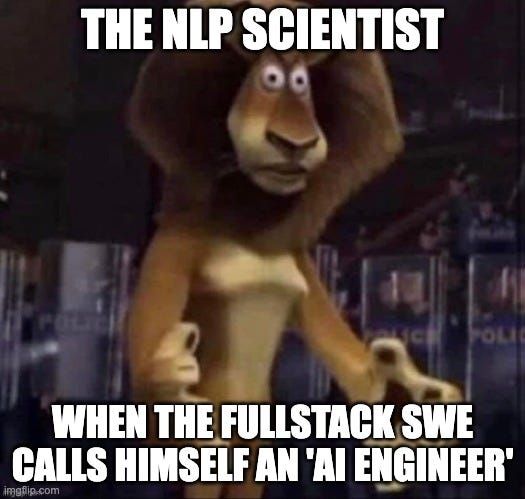

But what is an AI Engineer? The job report describes it as someone who will “design, develop and implement AI models and algorithms to optimize processes and solve complex problems.” Here’s the problem with that statement: designing and developing an AI model and implementing one to solve business problems are two completely different jobs! One of those individuals makes $3 million a year at Anthropic to design the next Claude model, and the other is on the frontlines solving business problems using generative AI as their primary tool of choice. This conflation of roles has caused many folks on the internet to crash out, complaining that “back in my day, AI engineers actually knew how AI models worked.” And that is a fair point. Most of the open roles you see for AI engineers have skill requirements that look suspiciously like those of software engineers.

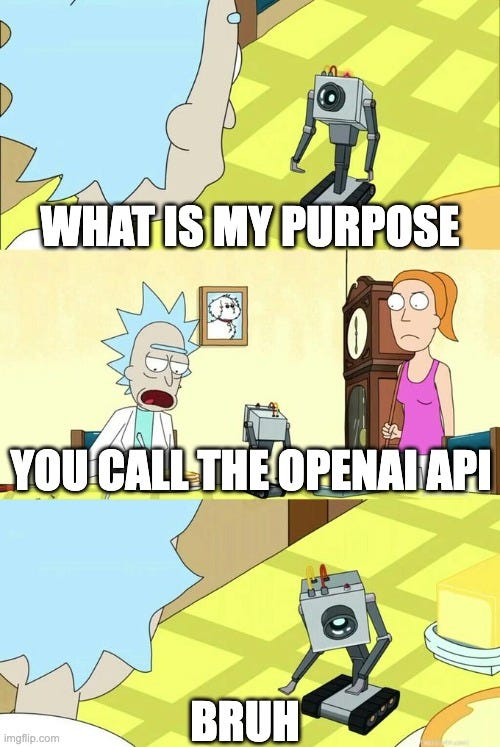

So I want to put my hot take out there: People who build AI models are NOT AI engineers. Call them anything else: ML engineers, data scientists, AI researchers, NLP specialists, MLOps engineers, LLM Ops engineers, LLM engineers, etc. But most AI engineer positions you see open on LinkedIn are not asking you to build an AI model, or even tinker with one. Why? Because 99% of companies believe they can gain more ROI from leveraging existing foundation models than from hiring experts who actually know how to build and fine-tune LLMs. The assumption is that the foundation models from the Big Three (OpenAI, Anthropic, and Google) [1] are so good that there is no point in trying to compete. It’s easier to just plug into what they have and focus on context engineering.

So if AI engineers are not defined by building AI models, what does define them? Is it just calling the OpenAI API? In my mind, the role of an AI engineer is defined by the following statement:

Think like a data scientist,

build like a software engineer

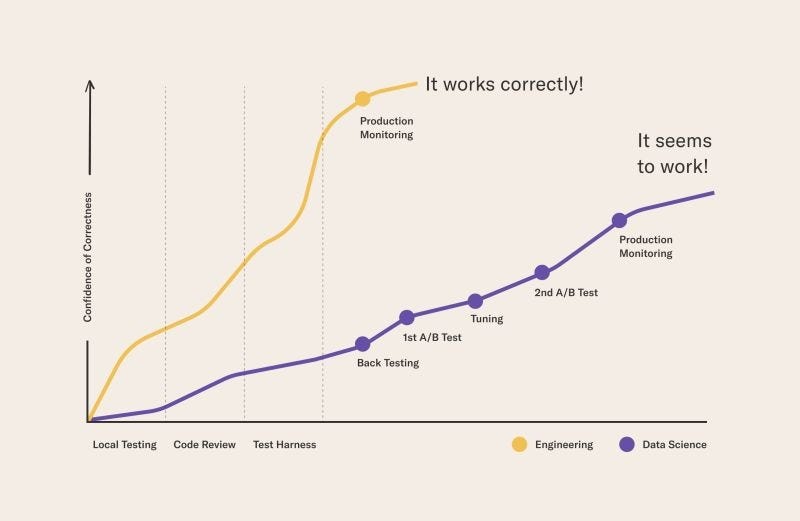

Much of the day-to-day work of an AI engineer looks suspiciously like that of a software engineer. You are writing application code or backend services that support applications. These are hard jobs, but they aren’t new. The key difference between the AI engineer and the software engineer is that non-deterministic generative AI is a critical component in an AI engineer’s applications and platforms. Why is this significant? Let’s look at the difference between software engineers and data scientists. Software engineers can feel confident that if something works in production on day one, it will likely work in production on day 20. Data scientists do not have that same luxury. The graph below, which I shamelessly stole from the internet (thanks Eric), illustrates this difference in confidence.

AI engineers straddle both disciplines. And that’s what makes it an interesting job. You need to know BOTH sides of the coin.

On the software engineer side, you need to:

- Build scalable infrastructure with IaC and CI/CD
- Create codebases that can handle dozens or hundreds of developers working simultaneously
- Navigate the cross-service chaos of microservices with modern observability practices, attention to security, and thoughtful API design
- Provide real value to users (the simplest but most often overlooked skill)

On the data science side, you need to:

- Handle non-deterministic behavior
- Use benchmarks and A/B testing
- Identify trade-offs between various approaches
- Understand how AI and data combine to solve previously impossible problems
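
To make the first two points concrete, here is a minimal sketch of what testing a non-deterministic component can look like: instead of asserting on a single output, you run each case many times and track a pass rate. Everything here is an assumption for illustration. `fake_model` is a hypothetical stand-in that answers correctly about 90% of the time, not a real model API, and `pass_rate` is a made-up helper name.

```python
import random
from typing import Callable


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a generative model call.

    Returns the right answer roughly 90% of the time to mimic
    non-deterministic behavior. Not a real model API.
    """
    return "4" if rng.random() < 0.9 else "5"


def pass_rate(
    model: Callable[[str, random.Random], str],
    cases: list[tuple[str, str]],
    trials: int,
    seed: int = 0,
) -> float:
    """Run every (prompt, expected) case `trials` times.

    Returns the fraction of runs whose output matched the expected answer.
    The seed makes the benchmark itself repeatable.
    """
    rng = random.Random(seed)
    passed = 0
    for prompt, expected in cases:
        for _ in range(trials):
            if model(prompt, rng) == expected:
                passed += 1
    return passed / (len(cases) * trials)


cases = [("What is 2 + 2?", "4")]
rate = pass_rate(fake_model, cases, trials=200)
print(f"pass rate: {rate:.2%}")
```

A single passing run tells you very little here. A pass rate over many trials is something you can track over time and compare across prompts or models.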

At the intersection of both of these are skills like:

- Knowing when AI is actually a subpar solution that could be replaced with deterministic code logic
- Staying on top of an avalanche of tech updates that are obscured by marketing and internet hype bros
- Evaluating when to build vs self-host vs buy, and identifying when to re-evaluate and change those decisions in a light-speed industry that constantly evolves
- Diffusing AI knowledge across the broader organization
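
As a rough illustration of the first point, here is a sketch of a "deterministic first" pattern: try plain code logic, and only hand the input to a model when that logic finds nothing. The order ID format, the `extract_order_id` function, and the `llm_fallback` parameter are all hypothetical names invented for this example, not part of any real library.

```python
import re
from typing import Callable, Optional

# Hypothetical order ID format for illustration: two capital letters,
# a dash, and six digits (for example "AB-123456").
ORDER_ID = re.compile(r"\b[A-Z]{2}-\d{6}\b")


def extract_order_id(
    text: str,
    llm_fallback: Optional[Callable[[str], Optional[str]]] = None,
) -> Optional[str]:
    """Return an order ID found in `text`, or None if there is none.

    The regex handles the common case cheaply and predictably. The
    optional fallback (a stand-in for a model call) is only consulted
    when the deterministic path finds nothing.
    """
    match = ORDER_ID.search(text)
    if match:
        return match.group(0)
    if llm_fallback is not None:
        return llm_fallback(text)
    return None


print(extract_order_id("Where is my order AB-123456?"))
```

If the regex covers nearly all real traffic, the model becomes an edge-case handler instead of the critical path, which is cheaper and easier to test.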

So to repeat: AI engineers blend the skillset and daily activities of a software engineer with the mindset and caution of a data scientist. They focus on building services that leverage generative AI, following the best practices for building production-worthy applications while also applying the rigorous testing standards of data science. While not every job posting for an AI engineer will match what I’ve described, this is the standard I believe the role is solidifying around, and what every AI engineer should strive for.

Quick plug: A lot of the things an AI engineer needs to know are outlined in the book I just wrote with Jacob Miller, Architected Intelligence. We directly address the skill gap that AI engineers have as they get pulled in from either the software engineering or data science side.

The bottom line: Think like a data scientist, build like a software engineer. That is the role and purpose of an AI engineer. Starting with that mantra will create a powerful foundation that will pay dividends moving forward.

[1] And yes, there are great open-source Chinese models as well, at least today. Whether to use closed-source American models, with built-in benefits such as web search, structured output, and code execution environments, or to self-host open-source models from Chinese firms that we hope will continue to charitably give away multi-million-dollar inventions for free, is a debate for a different day.